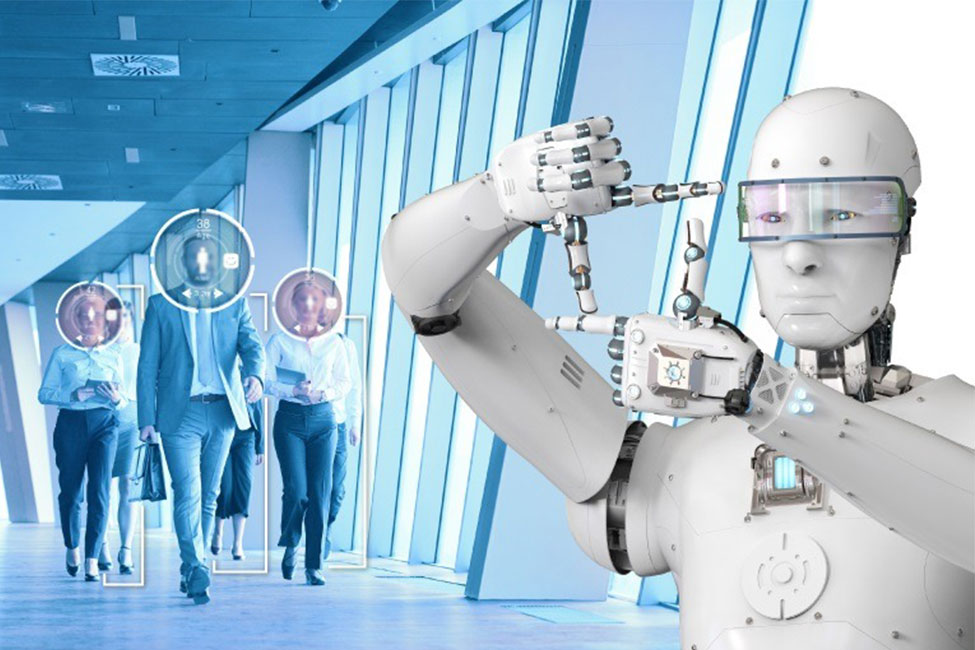

Amid the widespread deployment of facial recognition systems to tighten security, a recent US government study has shown that this artificial intelligence (AI)-based technology can produce erroneous results.

Researchers from the National Institute of Standards and Technology (NIST) found that two algorithms assigned the wrong gender to Black women almost 35 percent of the time.

The study, which examined dozens of facial recognition algorithms, also found higher rates of “false positives,” in which an individual is mistakenly identified, for non-white people, particularly Asian and African American individuals. This means the technology could confuse two people of the same race as much as 100 times more often than it would two white people.

NIST also found “false negatives,” in which the algorithm fails to match a face to the correct person in a database.

“A false negative might be merely an inconvenience — you can’t get into your phone, but the issue can usually be remediated by a second attempt. But a false positive in a one-to-many search puts an incorrect match on a list of candidates that warrant further scrutiny,” said lead researcher Patrick Grother.

He added that the results suggest more diverse training data could produce more equitable outcomes.

Meanwhile, some activists and researchers, including Jay Stanley of the American Civil Liberties Union (ACLU), warned that these failures could lead to prolonged investigations or, worse, the arrest of innocent people.

“One false match can lead to missed flights, lengthy interrogations, watchlist placements, tense police encounters, false arrests or worse. But the technology’s flaws are only one concern. Face recognition technology — accurate or not — can enable undetectable, persistent, and suspicionless surveillance on an unprecedented scale,” Stanley said.